Introduction

This article describes the issues that are fixed in Update Rollup 8 (UR8) for Microsoft System Center 2012 R2 Virtual Machine Manager. There are two updates available for System Center 2012 R2 Virtual Machine Manager: one for servers and one for the Administrator Console. This article also contains the installation instructions for Update Rollup 8 for System Center 2012 R2 Virtual Machine Manager.

Features that are added in this update rollup

- Support for SQL Server 2014 SP1 as VMM database

  With Update Rollup 8 for SC VMM 2012 R2, you can now have Microsoft SQL Server 2014 SP1 as the VMM database. This support does not include deploying service templates by using the SQL profile type as SQL Server 2014 SP1. For the latest information about SQL Server requirements for System Center 2012 R2, see the reference here.

- Support for VMware vCenter 6.0 management scenarios

  With Update Rollup 7, we announced support for management scenarios for vCenter 5.5. Building on our roadmap for vCenter and VMM integration and supportability, Update Rollup 8 adds support for VMware vCenter 6.0. For a complete list of supported scenarios, click here.

- Ability to set quotas for external IP addresses

  With Update Rollup 7, we announced support for multiple external IP addresses per virtual network, but there was no option to set quotas on the number of NAT connections. With UR8, you can now set quotas on the number of external IP addresses allowed per user role, which completes end-to-end support for this functionality. You can also manage this by using Windows Azure Pack (WAP).

  How do I use this functionality?

  PowerShell cmdlets:

  To set the quota for a user role:

  Set-UserRole -UserRole UserRoleObject -NATConnectionMaximum MaxNumber

  To remove the quota for a user role:

  Set-UserRole -UserRole UserRoleObject -RemoveNATConnectionMaximum

  Sample cmdlets:

  Set-UserRole -UserRole $UserRoleObject -NATConnectionMaximum 25

  Set-UserRole -UserRole $UserRoleObject -RemoveNATConnectionMaximum

- Support for quotas for checkpoints

  Before UR8, when you create a checkpoint through WAP, VMM does not check whether creating the checkpoint will exceed the tenant storage quota limit. Tenants can therefore create the checkpoint even if the storage quota limit will be exceeded.

  Sample scenario to explain the issue before UR8

  Consider a case in which a tenant administrator has a storage quota limit of 150 GB. Then, she follows these steps:

  1. She creates two VMs of VHD size 30 GB each (available storage before creation: 150 GB, post-creation: 90 GB).
  2. She creates a checkpoint for one of the VMs (available storage before creation: 90 GB, post-creation: 60 GB).
  3. She creates a new VM of VHD size 50 GB (available storage before creation: 60 GB, post-creation: 10 GB).
  4. She creates a checkpoint of the third VM (available storage before creation: 10 GB).

  Because the tenant administrator has insufficient storage quota left, VMM should block the creation of this checkpoint (step 4). But before UR8, VMM lets tenant administrators create the checkpoint and exceed the limit.

  With Update Rollup 8, VMM checks the tenant’s storage quota limit before it creates a checkpoint.
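The arithmetic in the sample scenario can be replayed with a small model. This is only an illustrative sketch, not VMM code: the `TenantQuota` class and its method names are hypothetical, and it assumes (as the scenario's numbers imply) that a checkpoint is charged against the quota at the VM's VHD size.

```python
from dataclasses import dataclass


@dataclass
class TenantQuota:
    """Hypothetical model of a tenant storage quota, in GB (not a VMM API)."""
    limit_gb: int
    used_gb: int = 0

    @property
    def available_gb(self) -> int:
        return self.limit_gb - self.used_gb

    def try_consume(self, size_gb: int) -> bool:
        """Charge size_gb against the quota only if it fits; report whether it did."""
        if size_gb > self.available_gb:
            return False
        self.used_gb += size_gb
        return True


quota = TenantQuota(limit_gb=150)

# Operations from the scenario; checkpoint sizes are assumed equal to the VHD size.
operations = [
    ("create VM 1 (30 GB VHD)", 30),
    ("create VM 2 (30 GB VHD)", 30),
    ("checkpoint VM 1", 30),
    ("create VM 3 (50 GB VHD)", 50),
    ("checkpoint VM 3", 50),
]

results = []
for label, size_gb in operations:
    allowed = quota.try_consume(size_gb)
    results.append((label, allowed, quota.available_gb))
    state = "allowed" if allowed else "blocked"
    print(f"{label}: {state}, {quota.available_gb} GB available")
```

With the check in place, the final checkpoint request is rejected because it needs more than the 10 GB that remains, which is the behavior the scenario describes for UR8.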

-

-

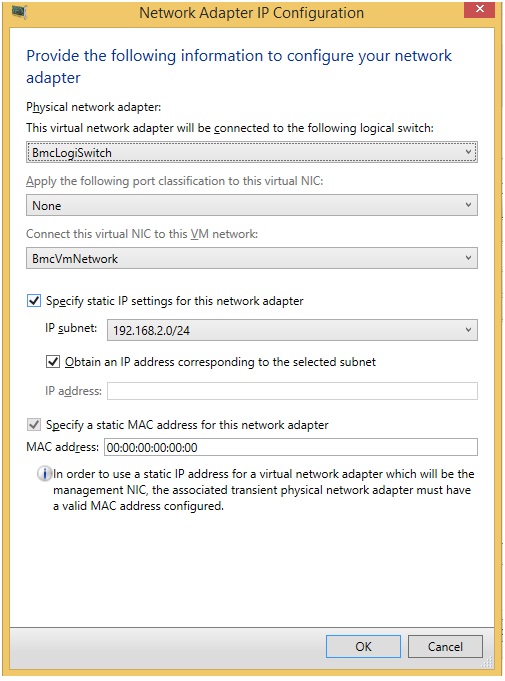

Ability to configure static network adapter MAC address during operating system deploymentWith Update Rollup 8, we now provide the functionality to configure static network adapter MAC addresses during operating system deployment. If you have ever done Bare Metal provisioning of hosts and ended up having multiple hosts with the same MAC addresses (because of dynamic IP address assignment for network adapters), this could be a real savior for you.How do I use this functionality?PowerShell cmdlet:

New-SCPhysicalComputerNetworkAdapterConfig -UseStaticIPForIPConfiguration -SetAsGenericNIC -SetAsVirtualNetworkAdapter -IPv4Subnet String -LogicalSwitch Logical_Switch -VMNetwork VM_Network -MACAddress MAC_Address

To configure a static MAC address, you can specify the MACAddress parameter in the previous PowerShell cmdlet. If no MAC address parameter is specified, VMM configures it as dynamic (VMM allocates Hyper-V to assign the MAC Address.). To choose MAC from the default VMM MAC address pool, you can specify the MAC address as 00:00:00:00:00:00., as in the following screen shot:
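The three outcomes described for the MACAddress parameter (omitted, all zeros, or a specific address) can be summarized as a small decision function. This is a sketch for illustration only: `classify_mac_argument` is a hypothetical helper, not part of VMM, and the colon-separated format check is an assumption based on the all-zeros value shown in the article.

```python
import re

# Value the article gives for requesting an address from the default VMM MAC pool.
VMM_POOL_SENTINEL = "00:00:00:00:00:00"

# Assumed input format: six colon-separated hexadecimal octets.
MAC_PATTERN = re.compile(r"^[0-9A-Fa-f]{2}(:[0-9A-Fa-f]{2}){5}$")


def classify_mac_argument(mac_address=None):
    """Return 'dynamic', 'vmm-pool', or 'static' for a MACAddress value."""
    if mac_address is None:
        # Parameter omitted: Hyper-V assigns the address.
        return "dynamic"
    if not MAC_PATTERN.match(mac_address):
        raise ValueError(f"not a colon-separated MAC address: {mac_address!r}")
    if mac_address == VMM_POOL_SENTINEL:
        # All zeros: take an address from the default VMM MAC address pool.
        return "vmm-pool"
    # Any other well-formed address is used as the static MAC address.
    return "static"
```

For example, `classify_mac_argument("00:1D:D8:B7:1C:00")` is treated as a static address, while calling the function with no argument corresponds to the dynamic case.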

- Ability to deploy extended Hyper-V Port ACLs

  With Update Rollup 8 for VMM, you can now:

  - Define ACLs and their rules
  - Attach the ACLs created to a VM network, VM subnets, or virtual network adapters
  - Attach the ACL to global settings that apply it to all virtual network adapters
  - View and update ACL rules configured on the virtual network adapter in VMM
  - Delete port ACLs and ACL rules

  For more information, see Knowledge Base article 3101161.

- Support for storage space tiering in VMM

  With Update Rollup 8, VMM now lets you create file shares with tiers (SSD/HDD).

  For more information, see Knowledge Base article 3101159.

Issues that are fixed in this update rollup

- Issue 1

  Creation of Generation 2 VMs fails with the following error:

  Error (13206) Virtual Machine Manager cannot locate the boot or system volume on virtual machine <VM name>. The resulting virtual machine might not start or operate properly.

- Issue 2

  VMM does not let you set the owner of a hardware profile with an owner name that contains the "$" symbol.

- Issue 3

  HA VMs with VLAN configured on the network sites of a logical network cannot be migrated from one host to another. The following error is thrown when you try to migrate the VM:

  Error (26857) The VLAN ID (xxx) is not valid because the VM network (xxx) does not include the VLAN ID in a network site accessible by the host.

- Issue 4

  The changes that a tenant administrator (with deploy permissions to a cloud) makes to the Memory and CPU settings of a VM in the cloud through the VMM Console are not saved. To work around this issue, change these settings by using PowerShell.

- Issue 5

  When a VM is deployed and put on an SMB3 file share that's hosted on NetApp filer 8.2.3 or later, the VM deployment process leaves a stale session open for each VM that is deployed to the share. When many VMs are deployed by using this process, VM deployment starts to fail because the maximum number of allowed SMB sessions on the NetApp filer is reached.

- Issue 6

  VMM hangs because of SQL Server performance issues when you perform VMM day-to-day operations. This issue occurs because of stale entries in the tbl_PCMT_PerfHistory_Raw table. With UR8, new stale entries are not created in the tbl_PCMT_PerfHistory_Raw table. However, the entries that existed before installation of UR8 will continue to exist. To delete the existing stale entries from the SQL Server tables, use the following SQL script:

  DELETE FROM tbl_PCMT_PerfHistory_Raw WHERE TieredPerfCounterID NOT IN (SELECT DISTINCT tieredPerfCounterID FROM dbo.tbl_PCMT_TieredPerfCounter);

  DELETE FROM tbl_PCMT_PerfHistory_Hourly WHERE TieredPerfCounterID NOT IN (SELECT DISTINCT tieredPerfCounterID FROM dbo.tbl_PCMT_TieredPerfCounter);

  DELETE FROM tbl_PCMT_PerfHistory_Daily WHERE TieredPerfCounterID NOT IN (SELECT DISTINCT tieredPerfCounterID FROM dbo.tbl_PCMT_TieredPerfCounter);

- Issue 7

  In a deployment with virtualized Fibre Channel adapters, VMM does not update the SMI-S storage provider, and it throws the following exception:

  Name : Reads Storage Provider

  Description : Reads Storage Provider

  Progress : 0 %

  Status : Failed

  CmdletName : Read-SCStorageProvider

  ErrorInfo : FailedtoAcquireLock (2606)

  This occurs because VMM first obtains the Write lock for an object and then later tries to obtain the Delete lock for the same object.

- Issue 8

  For VMs with VHDs that are put on a Scale-Out File Server (SOFS) over SMB, the Disk Read Speed VM performance counter incorrectly displays zero in the VMM Admin Console. This prevents an enterprise from monitoring its top IOPS consumers.

- Issue 9

  Dynamic Optimization fails, leaks a transaction, and prevents other jobs from executing. The leaked transaction remains blocked on the SQL Server computer until SCVMM is recycled or the offending SPID in SQL Server is killed.

- Issue 10

  V2V conversion fails when you try to migrate VMs from an ESX host to a Hyper-V host if the hard disk size of the VM on the ESX host is very large. The following error is thrown:

  Error (2901) The operation did not complete successfully because of a parameter or call sequence that is not valid. (The parameter is incorrect (0x80070057))

- Issue 11

  Live migration of VMs in an HNV network takes longer than expected. You may also find that pings to the migrating VM are lost. This occurs because the entire WNV policy table is transferred during the live migration (instead of only the delta). Therefore, if the WNV policy table is very large, the transfer is delayed and may cause VMs to lose connectivity on the new host.

- Issue 12

  VMM obtains a wrong MAC address while generating the HNV policy in deployments where F5 Load Balancers are used.

- Issue 13

  For IBM SVC devices, enabling replication fails in VMM because SVC requires the name of a consistency group to start with an alphabetical character (error code: 36900). This issue occurs because, while enabling replication, VMM generates random strings for naming the “consistency groups” and “relationship” between the source and the target, and these strings contain alphanumeric characters. Therefore, the first character that's generated by VMM may be a number, which violates the IBM SVC requirement.
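The naming constraint can be illustrated with a short sketch. This is not the change that VMM makes in UR8; it only shows one way to generate a random alphanumeric name whose first character is always a letter. The function name and the default length are hypothetical.

```python
import random
import string


def random_group_name(length=12, rng=random):
    """Return a random alphanumeric name that always starts with a letter."""
    if length < 1:
        raise ValueError("length must be at least 1")
    # Draw the first character from letters only, so it can never be a digit.
    first = rng.choice(string.ascii_letters)
    # The remaining characters may be letters or digits.
    alphabet = string.ascii_letters + string.digits
    rest = "".join(rng.choice(alphabet) for _ in range(length - 1))
    return first + rest
```

Drawing every character from the full alphanumeric set, by contrast, yields a leading digit in 10 of 62 cases on average, which matches the intermittent failure described above.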

- Issue 14

  In Update Rollup 6, we included a change that lets customers have a static MAC address even if the network adapter is not connected. This fix did not cover all scenarios correctly. It triggers an exception when a template has a connected network adapter and you later try to edit the static address in order to disconnect the network adapter.

- Issue 15

  After Update Rollup 6 is installed, as soon as a host goes into legacy mode, it does not come back to eventing for 20 days. Therefore, the VM properties are not refreshed, and no events are received from Hyper-V for 20 days.

  This issue occurs because of a change in UR6 that set the expiry to 20 days for both eventing mode and legacy mode. The legacy refresher, which should run after 2 minutes, now runs after 20 days, and until then, eventing is disabled.

  Workaround: To work around this issue, manually run the legacy refresher by refreshing VM properties.

-

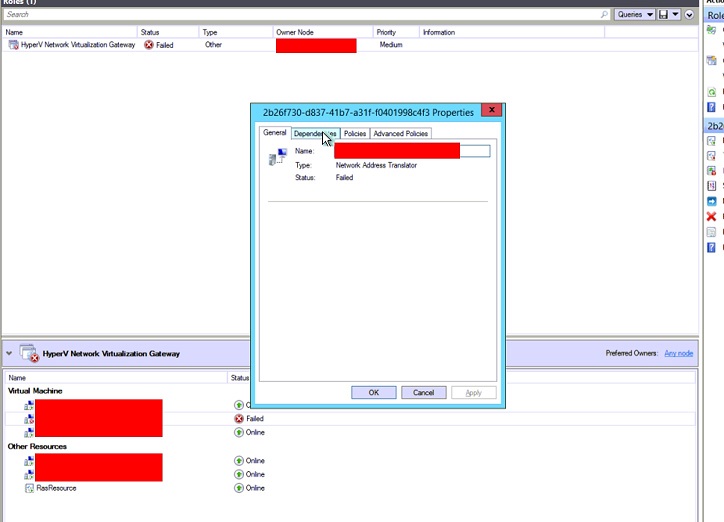

Issue 16Post-UR7, deleting a virtual network does not correctly clean up the cluster resources for the Network Virtualization Gateway. This causes the cluster role (cluster group) to go into a failed state when a failover of the HNV gateway cluster role occurs. This causes a failure status on the gateway, as in the following screen shot:

How to obtain and install Update Rollup 8 for System Center 2012 R2 Virtual Machine Manager

Download information

Update packages for Virtual Machine Manager are available from Windows Update or by manual download.

Windows Update

To obtain and install an update package from Windows Update, follow these steps on a computer that has a Virtual Machine Manager component installed:

1. Click Start, and then click Control Panel.
2. In Control Panel, double-click Windows Update.
3. In the Windows Update window, click Check Online for updates from Microsoft Update.
4. Click Important updates are available.
5. Select the update rollup package, and then click OK.
6. Click Install updates to install the update package.

Microsoft Update Catalog

Go to the following websites to manually download the update packages from the Microsoft Update Catalog:

To manually install the update packages, run the following command from an elevated command prompt:

msiexec.exe /update packagename

For example, to install the Update Rollup 8 package for a System Center 2012 R2 Virtual Machine Manager server (KB3096389), run the following command:

msiexec.exe /update kb3096389_vmmserver_amd64.msp

Note: Performing an update to Update Rollup 8 on the VMM server requires installing both the VMM Console and Server updates. Learn how to install, remove, or verify update rollups for Virtual Machine Manager 2012 R2.

Files updated in this update rollup

For a list of the files that are changed in this update rollup, click here.